Introduction

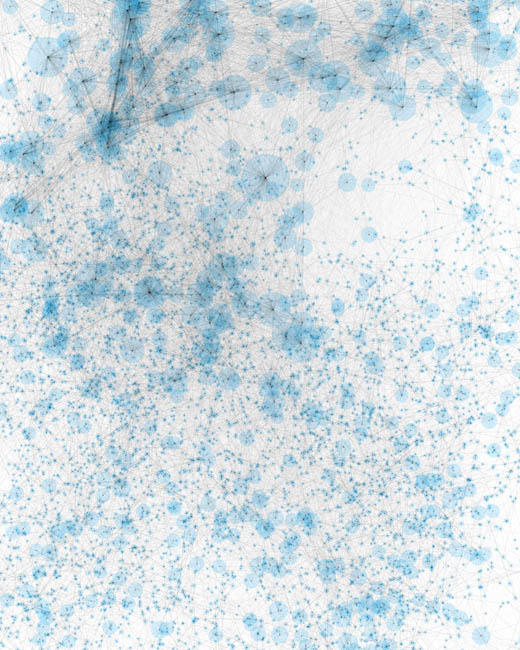

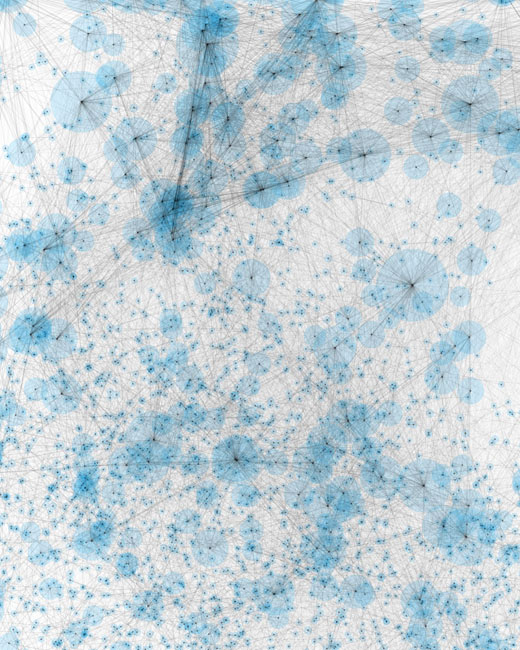

Many modern data sets, even those considered modestly sized, contain hundreds of thousands or even millions of variable pairs, far too many to examine manually. If you do not already know what kinds of relationships to search for, how do you efficiently identify the important ones?

MIC and the MINE family

The maximal information coefficient (MIC) is a measure of two-variable dependence designed specifically for rapid exploration of many-dimensional data sets. MIC is part of a larger family of maximal information-based nonparametric exploration (MINE) statistics, which can be used not only to identify important relationships in data sets but also to characterize them.
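To make the idea of a grid-based dependence score concrete, here is a minimal Python sketch. It is not the MINE implementation: it assumes equal-frequency grids only, whereas MIC searches over grid placements, so it can underestimate the true statistic. The names `equal_frequency_bins`, `grid_mutual_information`, and `approx_mic` are hypothetical, and the cell budget of n^0.6 is an assumed setting, based on the default commonly cited for MIC.

```python
import math
from collections import Counter


def equal_frequency_bins(values, n_bins):
    """Assign each value to one of n_bins bins holding nearly equal counts.

    Ties are broken by sort order, which is a simplification.
    """
    order = sorted(range(len(values)), key=lambda i: values[i])
    bins = [0] * len(values)
    for rank, i in enumerate(order):
        bins[i] = rank * n_bins // len(values)
    return bins


def grid_mutual_information(x, y, nx, ny):
    """Mutual information, in bits, of x and y binned on an nx-by-ny grid."""
    n = len(x)
    xb = equal_frequency_bins(x, nx)
    yb = equal_frequency_bins(y, ny)
    joint = Counter(zip(xb, yb))
    px = Counter(xb)
    py = Counter(yb)
    # Sum p(i, j) * log2(p(i, j) / (p(i) * p(j))) over occupied cells.
    return sum(
        (c / n) * math.log2(c * n / (px[i] * py[j]))
        for (i, j), c in joint.items()
    )


def approx_mic(x, y, alpha=0.6):
    """Highest normalized grid mutual information over small grids.

    Assumption: grids are limited to nx * ny <= n ** alpha cells, and only
    equal-frequency grids are tried (no optimization of grid placement).
    """
    n = len(x)
    max_cells = max(4, int(n ** alpha))
    best = 0.0
    for nx in range(2, max_cells // 2 + 1):
        for ny in range(2, max_cells // nx + 1):
            mi = grid_mutual_information(x, y, nx, ny)
            # Dividing by log2(min(nx, ny)) keeps each score in [0, 1].
            best = max(best, mi / math.log2(min(nx, ny)))
    return best
```

Under these assumptions, a noiseless linear or parabolic relationship should score close to 1, while two independent samples should score much lower. Restricting the search to equal-frequency grids keeps the sketch short, at the cost of missing relationships that only a carefully placed grid would capture.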

A paper describing MINE and applying it to data from global health, genomics, the human microbiome, and Major League Baseball was published in the journal Science. Subsequent papers improving and characterizing the method have been published in the Journal of Machine Learning Research and the Annals of Applied Statistics.

MINE was developed jointly by David Reshef and Yakir Reshef, working with Professors Pardis Sabeti and Michael Mitzenmacher.